Intelligence at the Edge: Why AI can't wait on the cloud

How mission-proven edge AI enables decisions when connectivity fails

Three Points to Remember

Adaptive Edge brings mission-ready AI directly onto sensors and platforms, enabling real-time decisions despite power, size, and connectivity constraints.

The Collaborative Autonomy Framework and Extension (CAFE) acts like a traffic controller: it is designed to help the right data reach the right system at the right time, sustaining mission execution in degraded or contested networks.

Together, Adaptive Edge and CAFE form a resilient edge AI ecosystem proven in real missions, from contested airspace to disaster response.

Why mission AI has to move beyond the cloud

Imagine a search-and-rescue drone sweeping a collapsed building after an earthquake. Onboard AI analyzes thermal imagery in real time, working to distinguish human heat signatures from debris and background noise. The system identifies a high-probability survivor and autonomously adjusts the drone’s flight path to get a closer look.

In moments like these, AI can’t wait on the cloud. It has to move closer to the mission, onto the edge-computing platforms used by operations teams and autonomous equipment on the front line. This is because centralized, cloud-based AI models require reliable connectivity, sufficient bandwidth, and time to move data back and forth, conditions that often don’t exist in disaster zones or other disrupted environments.

From cloud dependency to mission reality

Through projects like AlphaMosaic, Leidos has worked with AI at the edge, in environments where bandwidth is limited and connectivity cannot be assumed. These systems must sense, decide, and act in real time, often without reliable reach-back to centralized computing.

That operational challenge reveals a simple truth: edge AI isn’t just about running models on smaller hardware. It’s about deciding what information matters, where it should live, and how it moves when networks are constrained.

To solve that challenge, Leidos approaches edge AI as an integrated system rather than a single technology. Adaptive Edge and the Collaborative Autonomy Framework and Extension (CAFE) were developed to support real-world missions by pairing local AI decision-making with coordinated information sharing across distributed platforms. Adaptive Edge determines what intelligence is generated locally and acted on immediately, while CAFE manages how essential insights are shared across platforms to support coordinated action even when connectivity is degraded or denied.

Making the edge intelligent, not overloaded

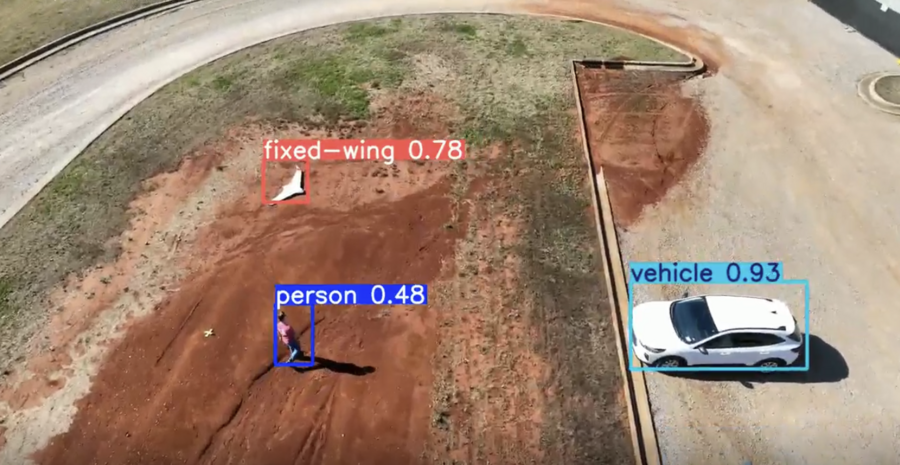

At the tactical edge, communication is one of the biggest constraints. Sensors, drones, vehicles, and handheld devices can generate more data than any network can support, especially in contested or remote environments. Without careful orchestration, limited bandwidth can quickly become a bottleneck.

Instead of sending everything back for processing, intelligent systems learn to recognize what matters most. An anomaly, a threat, or a deviation from normal behavior is elevated immediately. Routine or low-value data is handled locally.
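To make the idea concrete, here is a minimal sketch of on-device triage. It is not how Adaptive Edge works internally; it assumes a simple z-score test against recent readings. The names `Reading`, `triage`, and `z_threshold` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Reading:
    """One sensor sample (hypothetical structure for this sketch)."""
    sensor_id: str
    value: float


def triage(history: list[float], reading: Reading, z_threshold: float = 3.0) -> str:
    """Return 'escalate' for a strong deviation from recent history, else 'local'.

    Assumption: a plain z-score stands in for whatever model runs on the device.
    """
    if len(history) < 2:
        # Not enough context to judge a deviation; keep the data on the device.
        return "local"
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all counts as a deviation.
        return "escalate" if reading.value != mu else "local"
    z = abs(reading.value - mu) / sigma
    return "escalate" if z >= z_threshold else "local"


recent = [20.0, 21.0, 19.0, 20.5, 19.5]  # e.g. background temperatures in °C
hot_spot = triage(recent, Reading("thermal-1", 36.5))
background = triage(recent, Reading("thermal-1", 20.2))
```

In this sketch, only the reading labeled `escalate` would compete for scarce bandwidth; the rest stays on the device.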

This ability to prioritize information transforms edge platforms from passive collectors into active decision-makers. But modern missions rarely rely on a single platform acting alone; most operations depend on diverse systems working as a team in real time.

Keeping teams in sync when networks aren’t

That’s where CAFE builds on the foundation Adaptive Edge creates.

CAFE manages how information moves across distributed systems, acting as a traffic controller for autonomous teams. It determines when, where, and how data is shared so that the most critical insights can reach the right system at the right time. Even when communications are jammed or intermittent, CAFE helps ensure platforms continue operating as a coordinated unit rather than isolated assets.
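One way to picture the traffic-controller role is a priority queue drained against a per-window bandwidth budget. The sketch below illustrates that general store-and-forward pattern, not CAFE's actual design; `Message`, `OutboundQueue`, and the byte budgets are hypothetical.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Message:
    priority: int                        # lower number = more urgent
    seq: int                             # tie-breaker: earlier submissions first
    size_bytes: int = field(compare=False)
    payload: str = field(compare=False)


class OutboundQueue:
    """Holds messages until a link window opens, then sends the most urgent first."""

    def __init__(self) -> None:
        self._heap: list[Message] = []
        self._seq = 0

    def submit(self, priority: int, size_bytes: int, payload: str) -> None:
        heapq.heappush(self._heap, Message(priority, self._seq, size_bytes, payload))
        self._seq += 1

    def drain(self, budget_bytes: int) -> list[str]:
        """Send what fits in this window's budget; keep the rest queued."""
        sent, deferred = [], []
        while self._heap:
            msg = heapq.heappop(self._heap)
            if msg.size_bytes <= budget_bytes:
                budget_bytes -= msg.size_bytes
                sent.append(msg.payload)
            else:
                deferred.append(msg)
        for msg in deferred:
            heapq.heappush(self._heap, msg)
        return sent


queue = OutboundQueue()
queue.submit(2, 500, "routine telemetry")
queue.submit(0, 200, "survivor detected")
queue.submit(1, 400, "battery low")

degraded_window = queue.drain(700)   # constrained link: urgent items go first
jammed_window = queue.drain(0)       # no link: nothing is sent, nothing is lost
restored_window = queue.drain(1000)  # link returns: deferred data catches up
```

The design choice worth noting is that deferred messages are re-queued rather than dropped, so low-priority data still arrives once the link recovers.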

Together, Adaptive Edge and CAFE can enable intelligence not just at the edge, but across the edge.

Why the future of AI lives at the edge

Disaster zones, rural regions, and contested battlefields all share a common reality: the cloud isn’t always available. In these environments, resilience and autonomy must be built in from the start. By combining Adaptive Edge and CAFE, Leidos offers edge AI shaped by real mission experience: it brings intelligence to the device and is designed to help critical information flow across the mission when it matters most.

AI will continue to reshape how we sense, decide, and act. But its real test will always be in the field, on the move, and under pressure. Adaptive Edge and CAFE together demonstrate that intelligence at the edge isn’t just possible; it’s essential. The only question is: how fast can we get there?