Why MLOps is critical for government AI

Short for 'machine learning operations,' MLOps syncs production, monitoring and governance to securely launch and continuously update ML models.

Why it matters

- AI is scaling fast in government, but trust determines whether it's actually used.

- MLOps provides the structure to deploy, monitor and govern AI safely at scale.

- Without MLOps, models degrade, risks grow and mission outcomes are at stake.

Government missions are bringing on AI-enabled capabilities at a rapid pace, making AI trust more crucial than ever. A key element of building trust is the capability to govern the production, deployment and improvement of AI solutions, a discipline known as machine learning operations, or MLOps.

Thomas Boggs, Leidos’ director of AI and ML lifecycle engineering, describes MLOps as the implementation of people, processes, practices and technology tools to systematically take a machine learning model from concept to deployment and then through continuous monitoring, management and maintenance.

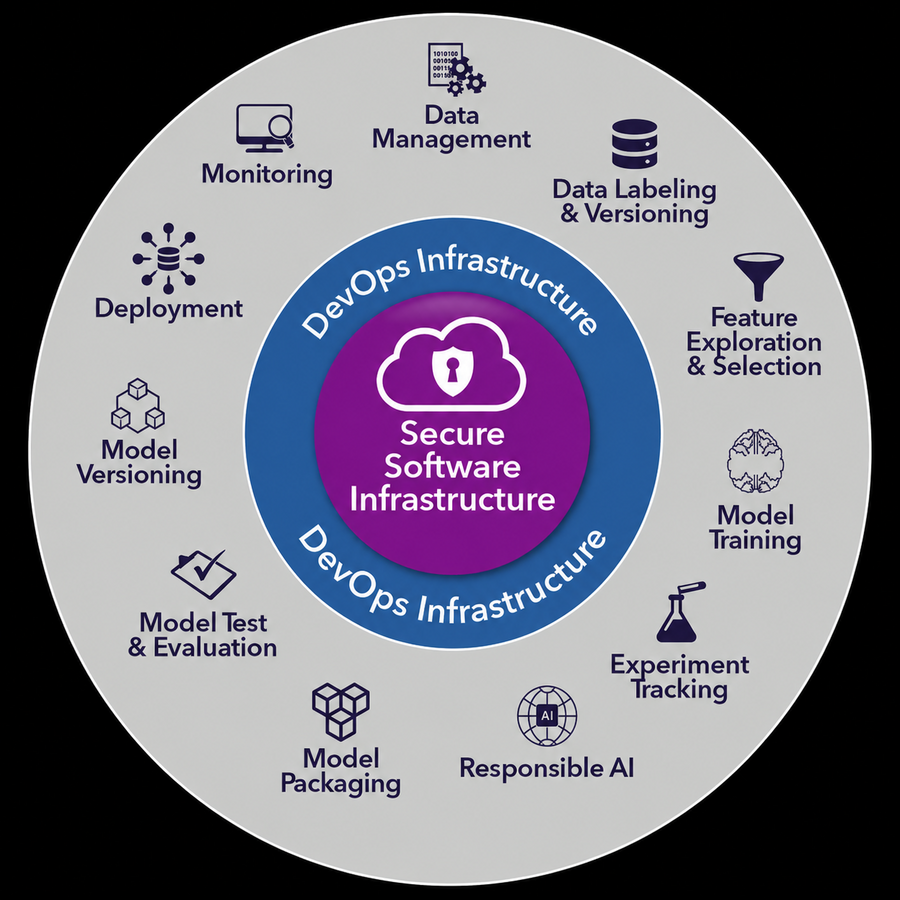

MLOps can create standardized, closed-loop AI engineering pipelines that incorporate security and compliance processes. It depends on teams working in unison on integrated, automated data science and IT operations toolchains.

The fast-changing, real-world conditions that ML models encounter once they’re fielded are also driving MLOps adoption. Without a rigorous and repeatable way to evaluate model outputs, performance could degrade over time and raise concerns about trust.
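One simple way to picture a repeatable evaluation of fielded model outputs is a sliding-window accuracy check. The sketch below is illustrative only: the class name `AccuracyDriftMonitor`, the window size and the tolerance are assumptions made for this example, not part of any Leidos tool, and it presumes ground-truth labels eventually become available for fielded predictions.

```python
from collections import deque


class AccuracyDriftMonitor:
    """Hypothetical monitor: tracks accuracy over a sliding window of outcomes."""

    def __init__(self, baseline_accuracy, window_size=100, tolerance=0.05):
        self.baseline_accuracy = baseline_accuracy  # accuracy measured at deployment
        self.tolerance = tolerance                  # allowed drop before flagging
        self.outcomes = deque(maxlen=window_size)   # True means a correct prediction

    def record(self, prediction, ground_truth):
        """Store whether a fielded prediction matched the confirmed label."""
        self.outcomes.append(prediction == ground_truth)

    def window_accuracy(self):
        """Return accuracy over the current window, or None if it is empty."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def has_drifted(self):
        """Flag drift only once the window is full, to avoid noisy early alerts."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.window_accuracy() < self.baseline_accuracy - self.tolerance


monitor = AccuracyDriftMonitor(baseline_accuracy=0.95, window_size=10)
for _ in range(10):
    monitor.record("benign", "benign")   # ten correct predictions fill the window
healthy = monitor.has_drifted()
for _ in range(3):
    monitor.record("benign", "threat")   # three misses replace three older hits
degraded = monitor.has_drifted()
```

In practice the drift flag would feed an alerting or retraining step rather than a local variable, and a production monitor would likely track more than one metric.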

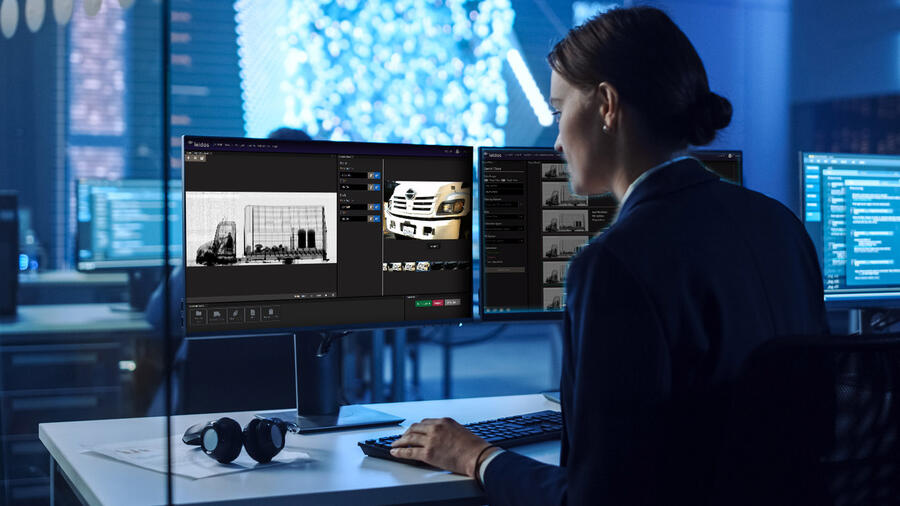

Leidos has a well-established approach for MLOps integration into government systems that is designed to be secure, repeatable and scalable, according to Tifani O’Brien, vice president of Leidos’ AI Accelerator. Customers with missions ranging from border security and intelligence understanding to disability claims processing and network security operations have seen accelerated cycle times to field, update and deploy models.

“Through MLOps, when we deploy AI, we know we’re doing it safely, securely and at scale,” O’Brien said. “In doing this for years, we’ve learned that trust gets AI adopted.”

MLOps trains and monitors AI in ever-changing operational conditions

Taking a page from DevOps in software development, MLOps uses continuous integration and delivery cycles that can help address accuracy drift as well as the constantly evolving safety and risk concerns that users have with AI.

For instance, ML models for automated threat detection at ports and borders need to be rapidly fine-tuned and redeployed every time they encounter new types of dangerous objects or simply new classes of commercial goods. Left unaddressed, such occurrences could lead to inaccurate results from computer vision systems that scan everything from cargo containers to vehicles to air travelers.

“There are constantly new products being created and shipped; even the characteristics of things being shipped may change over time,” Boggs said, pointing to the evolution from push-button cellphones to touch-screen smartphones as one notable example.

He continued: “We need a feedback loop to apply new data to retrain models when we have new threats or products detected as false positives. We want to be able to adapt quickly.”
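The feedback loop Boggs describes can be sketched as a queue of reviewer-corrected samples that triggers retraining once enough have accumulated. This is a minimal sketch under stated assumptions: `FeedbackLoop`, `report_false_positive` and the threshold are hypothetical names and values chosen for illustration, and the retraining step is a placeholder rather than a real training job.

```python
from dataclasses import dataclass, field


@dataclass
class FeedbackLoop:
    """Hypothetical loop: collects corrected samples and retrains in batches."""

    retrain_threshold: int = 3                    # assumed batch size for retraining
    pending: list = field(default_factory=list)   # corrected samples awaiting use
    model_version: int = 1

    def report_false_positive(self, sample_id, corrected_label):
        """Queue a reviewer-corrected sample; retrain when the batch is full."""
        self.pending.append((sample_id, corrected_label))
        if len(self.pending) >= self.retrain_threshold:
            return self._retrain()
        return None

    def _retrain(self):
        """Placeholder for a real training job: consume the batch, bump the version."""
        batch, self.pending = self.pending, []
        self.model_version += 1
        return {"version": self.model_version, "new_examples": len(batch)}


loop = FeedbackLoop(retrain_threshold=2)
first = loop.report_false_positive("scan-001", "smartphone")   # queued only
second = loop.report_false_positive("scan-002", "smartphone")  # triggers retrain
```

A threshold-based trigger is only one design choice; a scheduled retrain or a trigger tied to a drift alert would fit the same loop.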

Large language models add twist to managing AI

With large language models (LLMs) proliferating, MLOps adoption becomes even more critical. Leidos uses monitoring and observability tools for LLM-based generative and agentic AI deployments to help identify issues such as hallucinations, drift and even data poisoning and manipulation.

“We need to have high confidence that they will perform as intended,” Boggs said of LLMs. “We want visibility into LLM development and operations processes to understand how and why a model came to certain decisions and to make sure we have hardened solutions.”

For general tasks and internal business functions, many agencies are using unmodified LLMs with observability tools to monitor how well they are assisting users. At the same time, fit-for-purpose models or customized LLMs are being built for sensitive and domain-specific applications, trained on or using only agency datasets.

By bringing development in house and putting models in MLOps environments with centralized tracking and repositories, agencies can work to establish process rigor and gain greater oversight and transparency that may contribute to AI adoption.
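To make "centralized tracking and repositories" concrete, the sketch below shows a toy in-memory model registry that records each version alongside its training dataset and evaluation metrics. All names here (`ModelRegistry`, `ModelRecord`, the dataset and model labels) are hypothetical and chosen for illustration; a real deployment would use a persistent, access-controlled store.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ModelRecord:
    """One immutable registry entry describing a model version."""

    name: str
    version: int
    dataset: str
    metrics: dict
    registered_at: str


class ModelRegistry:
    """Hypothetical in-memory registry keeping an auditable version history."""

    def __init__(self):
        self._records = {}   # model name -> list of ModelRecord, oldest first

    def register(self, name, dataset, metrics):
        """Append a new version for the named model and return its record."""
        versions = self._records.setdefault(name, [])
        record = ModelRecord(
            name=name,
            version=len(versions) + 1,
            dataset=dataset,
            metrics=dict(metrics),   # copy so later caller edits do not leak in
            registered_at=datetime.now(timezone.utc).isoformat(),
        )
        versions.append(record)
        return record

    def latest(self, name):
        """Return the most recently registered version."""
        return self._records[name][-1]

    def history(self, name):
        """Return every version in registration order, for audits."""
        return list(self._records[name])


registry = ModelRegistry()
registry.register("claims-triage", "claims-2023", {"accuracy": 0.91})
registry.register("claims-triage", "claims-2024", {"accuracy": 0.94})
current = registry.latest("claims-triage")
```

Keeping the dataset name and metrics with every version is what lets an auditor answer which data produced the model currently in use.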

Leidos’ customer environments require protection of sensitive data and support different data and compute platforms. The company works with customers to deploy MLOps implementations in the cloud as well as in secure networks that use on-prem computing resources.

These implementations use Leidos-developed capabilities as well as commercially available and open-source tools, partner solutions and automation. The company builds tailored toolchains with playbooks and workflow templates intended to suit the customer’s environment and requirements.

Intersecting MLOps and AI governance

The ability to monitor and audit models is a key requirement of many AI governance strategies. To help each agency customer stay aligned with regulatory and ethics guardrails, Leidos integrates its 4A framework, dialing in the appropriate level of AI and human interaction among analysis, assistance, augmentation and autonomy. The company uses its FAIRS toolset, which stands for Framework for AI Resilience and Security, to put the 4A framework into operation.

FAIRS tools analyze and seek to detect flaws and gaps in model-training data to help reduce the risk of biased and unintended outcomes. These tools also monitor LLMs for errant behaviors or user inputs that could signal adversarial attempts to induce harmful outputs or actions. These capabilities support Leidos’ MLOps implementations by providing the feedback used to revise models and evaluate new versions for improved behaviors.
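As a generic illustration of what checking training data for gaps can look like, the sketch below flags classes that are missing or underrepresented in a labeled dataset. It is not the FAIRS toolset and makes no claim about how FAIRS works; the function name `find_underrepresented`, the class labels and the `min_share` threshold are all assumptions made for this example.

```python
from collections import Counter


def find_underrepresented(labels, expected_classes, min_share=0.10):
    """Return {class: share} for expected classes below min_share of the data."""
    counts = Counter(labels)
    total = len(labels)
    gaps = {}
    for cls in expected_classes:
        # Counter returns 0 for classes that never appear in the labels.
        share = counts[cls] / total if total else 0.0
        if share < min_share:
            gaps[cls] = share
    return gaps


# Toy label set: ten samples, with no "drone" examples at all.
labels = ["vehicle"] * 6 + ["container"] * 3 + ["luggage"] * 1
gaps = find_underrepresented(
    labels,
    expected_classes=["vehicle", "container", "luggage", "drone"],
    min_share=0.15,
)
```

Here `gaps` contains `luggage` and `drone`, the two classes a reviewer would want more examples of before trusting the model on them. Class balance is only one kind of gap; coverage across conditions such as lighting or sensor type would need its own checks.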

One of the 4A framework’s major principles is collaboration between humans and AI. Leidos applies explainability tools and human-centered AI design, which prioritizes transparency and augmenting human abilities rather than replacing them, with the goal of providing users the information to continuously assess the trustworthiness of model outputs.

“Every government organization has its own set of AI governance requirements, but we’ve gone into a number of agencies to help develop strategies,” Boggs noted. “We triage every AI project and assess where the risks are and how to mitigate them.”

Through the combination of MLOps, the 4A framework and FAIRS tools, Leidos provides customers a full-lifecycle model production infrastructure. This platform is designed to support a strong foundation for governance and trust as customers seek a reliable means of producing AI systems intended to be safe and ready to use.

Boggs reinforced that in the end, sound engineering and governance practices as well as team collaboration are crucial for scaling up and maturing AI operations.

“In many cases, agencies have the compute infrastructure and even the best technology stack available,” he said. “But it is important to recognize that MLOps is as much about people, processes and mindset as it is about technology.”

“It’s also more than just the continuous pipeline,” O’Brien reiterated. “It’s about the monitoring that ties into the governance of AI.”

Three points to remember

- As AI use in government missions expands rapidly, the need for trustworthy solutions is becoming more important than ever.

- A critical element of AI trust is the capability to govern the production lifecycle of ML models, called MLOps, analogous to DevOps in software development.

- Leidos practices an established MLOps approach designed to be repeatable, secure and scalable for government customers with AI mission applications.